Why Your Developer Vetting Process Is Just Resume Theater

Most companies think they are vetting developers. They are pattern-matching resumes. Here is what real technical screening looks like - and why it determines retention.

Here is a scenario every VP of Engineering has lived through.

You open a role. Applications come in. You screen for keywords. You run a phone screen. You send a technical challenge. You interview the finalist. They are sharp in conversation, solid on paper. You make the offer.

Six months later, you are managing a performance issue.

Not because the developer lied. Not because they are lazy. But because your vetting process measured the wrong things - and you did not find out until it was expensive to find out.

This is the dirty secret of technical hiring: most companies think they are vetting developers. They are actually pattern-matching resumes.

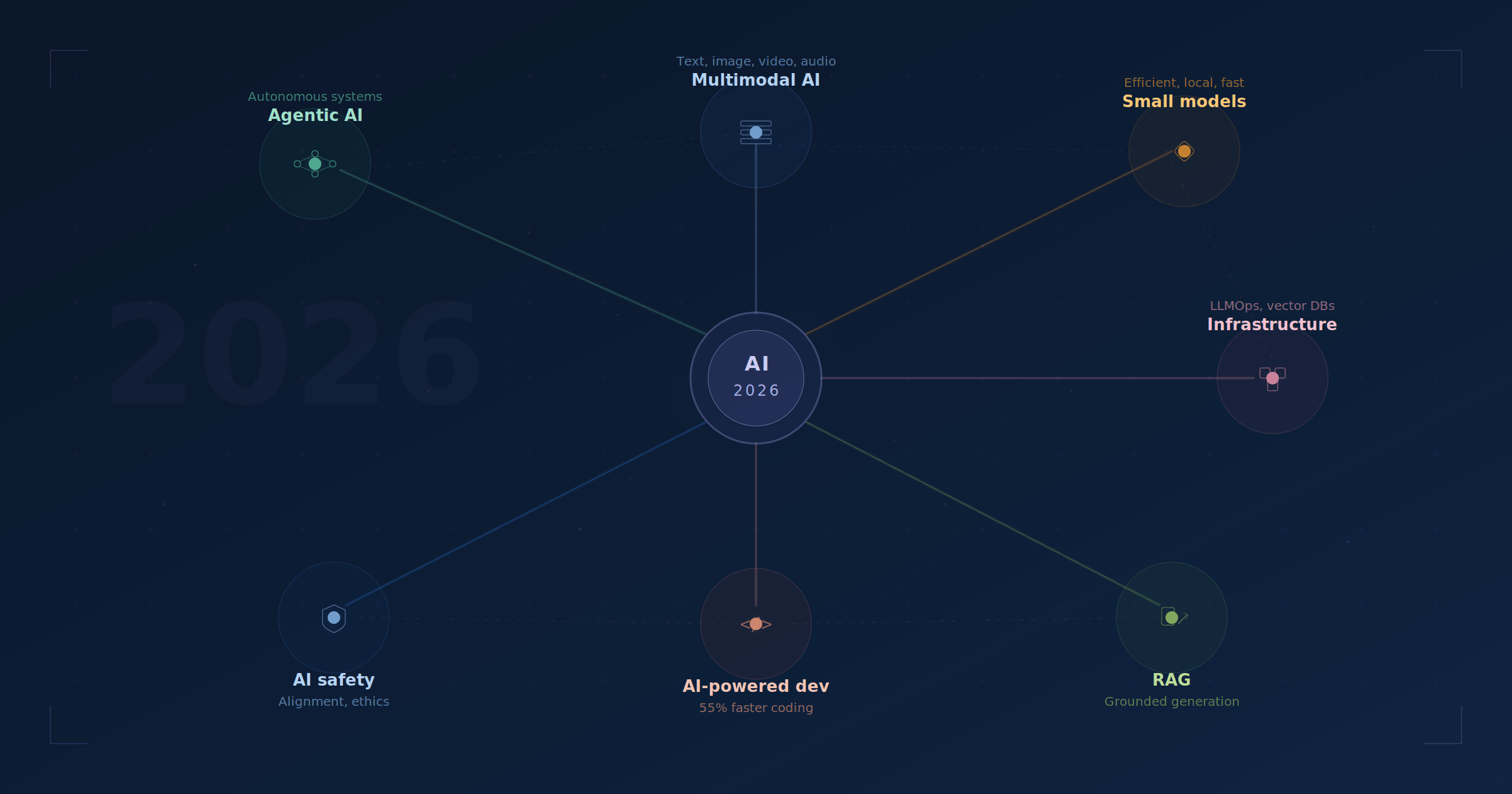

Why AI Screening Makes This Problem Worse

AI resume screening tools are everywhere right now, and they are genuinely useful for one thing: filtering by keywords.

They are not useful for evaluating judgment. Communication under pressure. The ability to debug a production incident and document what broke. Whether someone is still growing three years in, or running on autopilot since their last job.

The problem is not the tool. It is what the tool optimizes for.

Keywords tell you where someone worked and what technologies they listed. They do not tell you what they actually shipped, whether it held up in production, or whether they learned anything when it did not.

Research from Stack Overflow found that 37% of employers said they preferred AI tools over recent grads for routine coding tasks in 2025 - but the engineering teams reporting the highest churn were also the ones leaning hardest on automated screening. When you optimize for volume, you get volume. Quality is a different filter.

The cost of getting it wrong is not small. Replacing a mid-senior developer runs roughly $150,000 when you account for the full cycle: recruiter fees, onboarding time, productivity drag on the team covering the gap, and the ramp back up with the next hire. Most companies absorb this loss quietly and call it the cost of hiring. It is actually the cost of vetting badly.

What Real Technical Screening Actually Measures

There is one interview question that tells me more than a two-hour whiteboard session.

“Walk me through a production problem you had to debug recently. What tools did you use? What did you learn?”

It is not a trick. It is not designed to catch anyone. But it immediately surfaces three things.

First: has this person actually shipped code that ran in production? If they hesitate, pivot to theoretical scenarios, or describe something that sounds like a local development environment, you know exactly where they are in their career. That is information, not a disqualifier - but you need it.

Second: how do they think under pressure? Do they describe a methodical process, or is it a blur of random things they tried until something worked? Do they take ownership of the investigation, or do they externalize - the environment was flaky, someone else wrote that code? Can they explain the root cause to someone who was not there?

Third: what is their tool fluency? A developer who says “I added some logs and eventually found it” is at a different level than someone who talks about distributed tracing, structured log aggregation, database query analysis, or profiling tools. Neither answer is automatically wrong. The gap is real, and most interview processes miss it entirely.

The developers who answer this question best always close the same way: “here is what I changed in my process after that.” They see production failures as education. They are still learning from things that happened months ago. That is the profile that sticks around. That is the profile that makes your team measurably better.

That profile does not reliably appear in a keyword-screened resume.

The Technical Founder Difference

Most recruiting firms evaluate candidates the same way. They read the resume, run a behavioral screen, and pass the candidate to the hiring manager for the actual technical assessment. The recruiter is a filter, not an evaluator.

This model exists because most recruiters are not technical. They cannot tell the difference between someone who understood a system and someone who just used it. They cannot evaluate whether a candidate’s approach to a database architecture problem was smart or cargo-culted from Stack Overflow. They are reading for signals they do not know how to interpret.

DecodeTalent was built by someone who builds software.

Shawn Mayzes scaled an engineering team from 10 to 42 developers at Sycle, a company that was acquired for $78M. Alongside DecodeTalent, he runs Jetpack Labs, a software consultancy where he is actively shipping production code today. When he evaluates a candidate, the conversation is different because he is asking the questions a senior engineer would ask - not the questions a recruiter learned to ask.

That changes what gets through the filter.

The developers who make it through DecodeTalent’s process are not just technically competent. They have demonstrated how they think, how they communicate under pressure, how they handle failure, and whether they are growing. The screening is slower than a keyword filter. It is significantly faster than six months of a bad hire.

And it does not stop at the screening. Every developer placed through DecodeTalent gets access to the Decode Academy - a curriculum covering AI-led development workflows, advanced systems architecture, and engineering best practices. Candidates are not just vetted before they start. They are actively upskilling throughout their placement.

For a hiring manager, this means the developer joining your team is not coasting on their current skills. They are working on the things that will matter in 12 months: how to integrate AI tooling effectively into real codebases, how to architect for scale, how to think about systems that outlive the initial sprint. You are not just getting their current skill level. You are getting their trajectory.

The Only Metric That Proves Vetting Works

The standard recruiter model is structurally misaligned with your interests.

Most placement firms get paid when someone accepts an offer. That is the moment of success in their model. Whether that person is still there in 18 months - and whether your team is better for it - is not their problem.

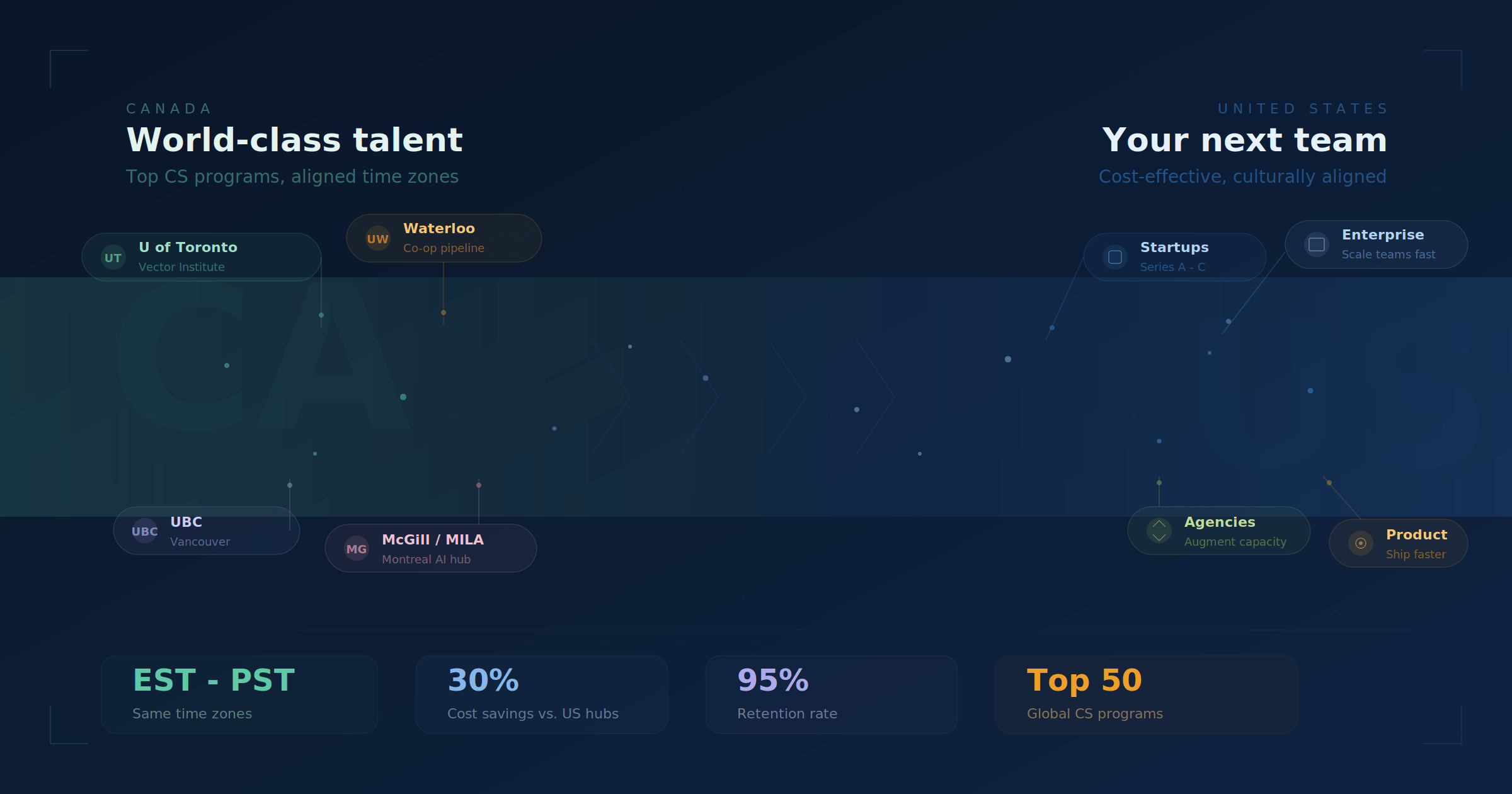

DecodeTalent’s 95% retention rate exists because the entire process is built around a different goal: long-term fit. Not speed-to-placement. Not keyword density. Fit.

This means screening for communication style as much as technical depth. It means matching candidates to teams where their working approach will complement the existing culture, not clash with it. It means being honest when a candidate is technically strong but wrong for a specific engagement. That last one sounds obvious. It is not how most agencies operate - because most agencies are optimizing for commissions, not cohesion.

The developers in DecodeTalent’s community also know they are being placed in roles where they can grow, not just roles that fill headcount. That produces a fundamentally different level of engagement than someone who took the first offer that landed in their inbox.

The Canadian nearshore advantage compounds on top of all of this. Same time zones as the continental US - no scheduling acrobatics, no async lag on critical decisions. North American work culture alignment, which matters more than most hiring managers admit until they have managed across a 12-hour gap. And a meaningful cost efficiency compared to US hiring, without the hidden overhead that offshore arrangements quietly introduce through rework, miscommunication, and management time.

Research from Arnia Software in 2025 found that nearshore development outpaces offshore models by 25% in collaborative environments, and that coordination overhead in offshore arrangements erodes up to 25% of initial cost savings through rework alone. The math on offshore looks better until you account for everything the savings are funding.
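To make that arithmetic concrete, here is a minimal sketch in Python. The 25% erosion figure is the upper bound cited above. The two annual cost figures are hypothetical placeholders chosen for illustration, not data from the research.

```python
def effective_savings(onshore_cost: float, offshore_cost: float,
                      erosion: float = 0.25) -> float:
    """Return the savings left after rework erodes a share of the nominal savings.

    `erosion` defaults to 0.25, the upper bound cited in the article.
    """
    nominal = onshore_cost - offshore_cost
    return nominal * (1 - erosion)


# Hypothetical annual cost per developer, for illustration only.
onshore = 160_000
offshore = 100_000

nominal = onshore - offshore
remaining = effective_savings(onshore, offshore)
print(f"Nominal savings: ${nominal:,.0f}; after rework: ${remaining:,.0f}")
```

With these placeholder figures, a quarter of the headline savings disappears before miscommunication and management time are counted at all.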

The Question Worth Asking Before Your Next Hire

You do not need to rebuild your entire interview process to start vetting differently. You need to know whether what you have is measuring what you think it is.

Here is the question: if you ran your three strongest recent hires through your current process, would they pass?

If yes - you probably have solid signal in your filter. If you are not sure, or if the honest answer is probably not - your process is screening for something other than the qualities that made those hires succeed.
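One way to run that check is a small back-test. The sketch below is illustrative only: the stage names follow the hiring flow described at the top of this article, and the three hires and their outcomes are hypothetical. In practice you would fill them in by honestly re-scoring real hires against your current process.

```python
from collections import Counter
from typing import Dict, Optional, Tuple

# Stages from the hiring flow described earlier, in order.
STAGES = ["keyword screen", "phone screen", "technical challenge", "final interview"]


def first_failed_stage(outcomes: Dict[str, bool]) -> Optional[str]:
    """Return the first stage a hire would fail today, or None if they pass all."""
    for stage in STAGES:
        if not outcomes.get(stage, False):
            return stage
    return None


def backtest(hires: Dict[str, Dict[str, bool]]) -> Tuple[float, Counter]:
    """Return the pass rate and a count of which stage rejected each failing hire."""
    if not hires:
        raise ValueError("need at least one hire to back-test")
    failures: Counter = Counter()
    passed = 0
    for outcomes in hires.values():
        stage = first_failed_stage(outcomes)
        if stage is None:
            passed += 1
        else:
            failures[stage] += 1
    return passed / len(hires), failures


# Hypothetical re-scoring of three strong hires against today's process.
all_pass = {stage: True for stage in STAGES}
hires = {
    "hire_a": dict(all_pass),
    "hire_b": {**all_pass, "keyword screen": False},
    "hire_c": {**all_pass, "technical challenge": False},
}

rate, failures = backtest(hires)
print(f"Pass rate: {rate:.0%}")
print("Rejecting stages:", failures.most_common())
```

The pass rate matters less than the failure counts. If your best people would be rejected at the keyword screen, that stage is filtering on something unrelated to what made them good.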

The Bureau of Labor Statistics projects 17.9% growth in software developer roles through 2033, against a talent supply that is not expanding fast enough to keep pace. In a tighter market, the companies that win on hiring are the ones whose vetting is precise. Not just fast. Precise.

The question is not whether a candidate can code. Given the maturity of AI development tools, that baseline matters less than it did. The real question is whether this person will still be here in 18 months, growing into harder problems, and making your team better while they do it.

If your current process does not reliably answer that question, it is worth a conversation.

Book a discovery call to see how DecodeTalent approaches technical screening - and whether pre-vetted Canadian developers are the right fit for your next hire.